Using GPUs on Nebari

Introduction

This guide gives an overview of using GPUs on Nebari, including server setup, environment setup, and validation.

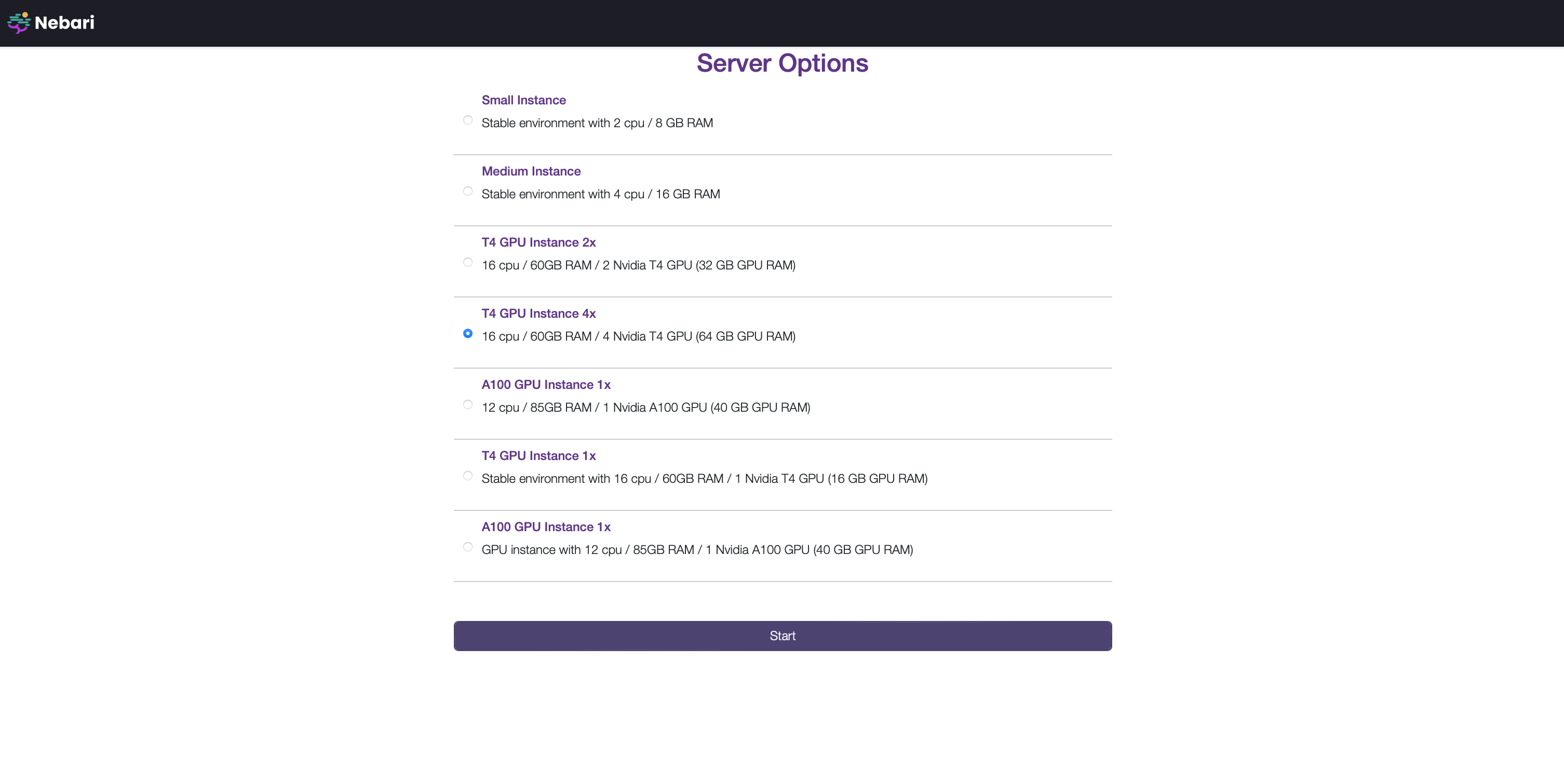

1. Starting a GPU server

Follow Steps 1 to 3 in the [Authenticate and launch JupyterLab][login-with-keycloak] tutorial. The UI will show a list of profiles (also known as instances, servers, or machines).

Your administrator pre-configures these options, as described in [Profile Configuration documentation][profile-configuration].

Select an appropriate GPU Instance and click "Start".

Understanding GPU setup on the server

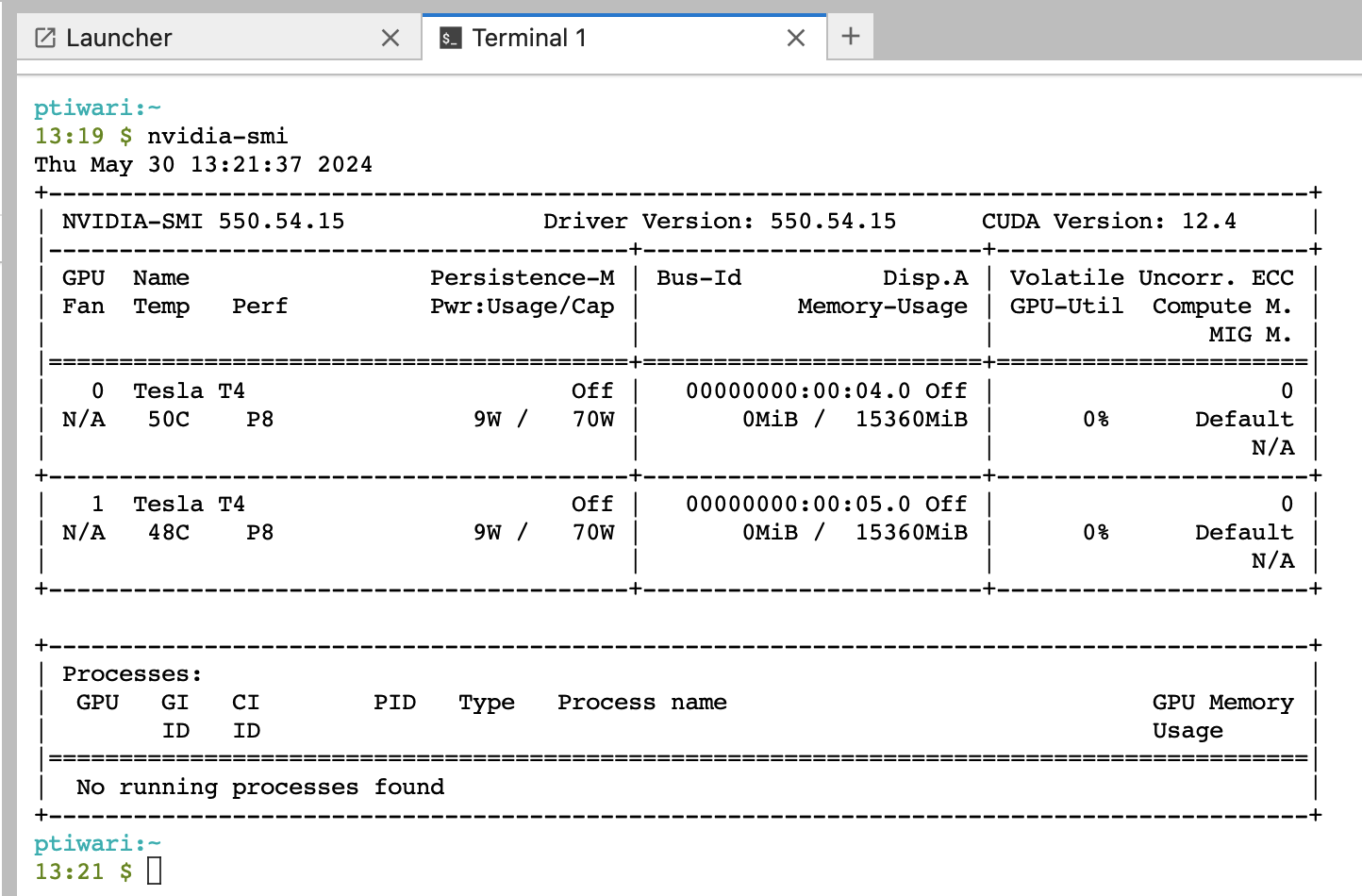

The following steps describe how to get CUDA-related information from the server.

- Once your server starts, it will redirect you to a JupyterLab home page.

- Click on the "Terminal" icon.

- Run the command `nvidia-smi`. The top right corner of the command's output shows the highest CUDA version that the installed driver supports.

If you get the error `nvidia-smi: command not found`, you are most likely on a non-GPU server. Shut down your server and start a GPU-enabled server.

Compatible environments for this server must use a CUDA version at or below the version reported by the GPU server. For example, the server in this case is on 12.4, so all environments used on this server must contain packages built with CUDA<=12.4.
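The compatibility rule above can be sketched in a few lines of Python. This is a minimal illustration, not part of Nebari or conda-store: the helper names (`parse_version`, `driver_cuda_version`, `is_compatible`) are hypothetical, and the sample header line is only an illustrative stand-in for the first lines of real `nvidia-smi` output. Versions are compared as tuples of integers, because comparing them as strings would wrongly rank "12.10" below "12.4".

```python
import re


def parse_version(text):
    """Turn a version string such as '12.4' into a tuple of ints: (12, 4)."""
    return tuple(int(part) for part in text.strip().split("."))


def driver_cuda_version(smi_output):
    """Extract the 'CUDA Version: X.Y' value from nvidia-smi header text."""
    match = re.search(r"CUDA Version:\s*(\d+(?:\.\d+)*)", smi_output)
    if match is None:
        raise ValueError("no 'CUDA Version' field found in the given text")
    return match.group(1)


def is_compatible(env_cuda, driver_cuda):
    """Return True if the environment's CUDA version does not exceed the driver's."""
    return parse_version(env_cuda) <= parse_version(driver_cuda)


# Illustrative header line (assumed sample, not captured from a real server).
sample_header = "| NVIDIA-SMI 550.54.15  Driver Version: 550.54.15  CUDA Version: 12.4 |"

driver = driver_cuda_version(sample_header)
print(f"Driver supports CUDA up to: {driver}")
print(f"CUDA 12.1 environment usable: {is_compatible('12.1', driver)}")
print(f"CUDA 12.6 environment usable: {is_compatible('12.6', driver)}")
```

On a real server, the text passed to `driver_cuda_version` would be whatever `nvidia-smi` prints in the terminal.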

2. Creating environments

By default, conda-store will build CPU-compatible packages. To build GPU-compatible packages, we do the following.

Build a GPU-compatible environment

There are two options for building a GPU-compatible environment:

- Create the environment specification using `CONDA_OVERRIDE_CUDA` (recommended approach):

conda-store provides an alternative mechanism to enable GPU environments by setting an environment variable, as explained in the conda-store docs.

While creating a new config, click on the **GUI <-> YAML** toggle to edit the YAML config.

    channels:
      - pytorch
      - conda-forge
    dependencies:
      - pytorch
      - ipykernel
    variables:
      CONDA_OVERRIDE_CUDA: "12.1"

Alternatively, you can configure the same config using the UI.

Add the CONDA_OVERRIDE_CUDA override to the variables section to tell conda-store to build a GPU-compatible environment.

At the time of writing this document, the latest CUDA version was showing as 12.1. Please follow the steps below to determine the latest override value for the CONDA_OVERRIDE_CUDA environment variable.

Please ensure that the version you choose from the PyTorch documentation is not greater than the highest supported version in the `nvidia-smi` output (captured above).
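As a rough sketch of that selection rule, the snippet below picks the highest candidate version that does not exceed what the driver supports. The function name `pick_override` and the candidate list are hypothetical and chosen only for illustration. The real candidates are whatever the PyTorch documentation currently offers, and the driver maximum is the value reported by `nvidia-smi` on your server.

```python
def pick_override(candidates, driver_max):
    """Return the highest candidate CUDA version that is <= driver_max."""

    def as_tuple(version):
        # Compare numerically: "12.10" must rank above "12.4".
        return tuple(int(part) for part in version.split("."))

    usable = [v for v in candidates if as_tuple(v) <= as_tuple(driver_max)]
    if not usable:
        raise ValueError(f"no candidate is supported by a CUDA {driver_max} driver")
    return max(usable, key=as_tuple)


# Hypothetical list of versions offered by a package selector.
offered = ["11.8", "12.1", "12.6"]

# With a driver that reports CUDA 12.4, 12.6 is too new, so 12.1 is chosen.
override = pick_override(offered, driver_max="12.4")
print(f'CONDA_OVERRIDE_CUDA: "{override}"')
```

The printed line has the same shape as the entry in the `variables` section of the environment specification.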

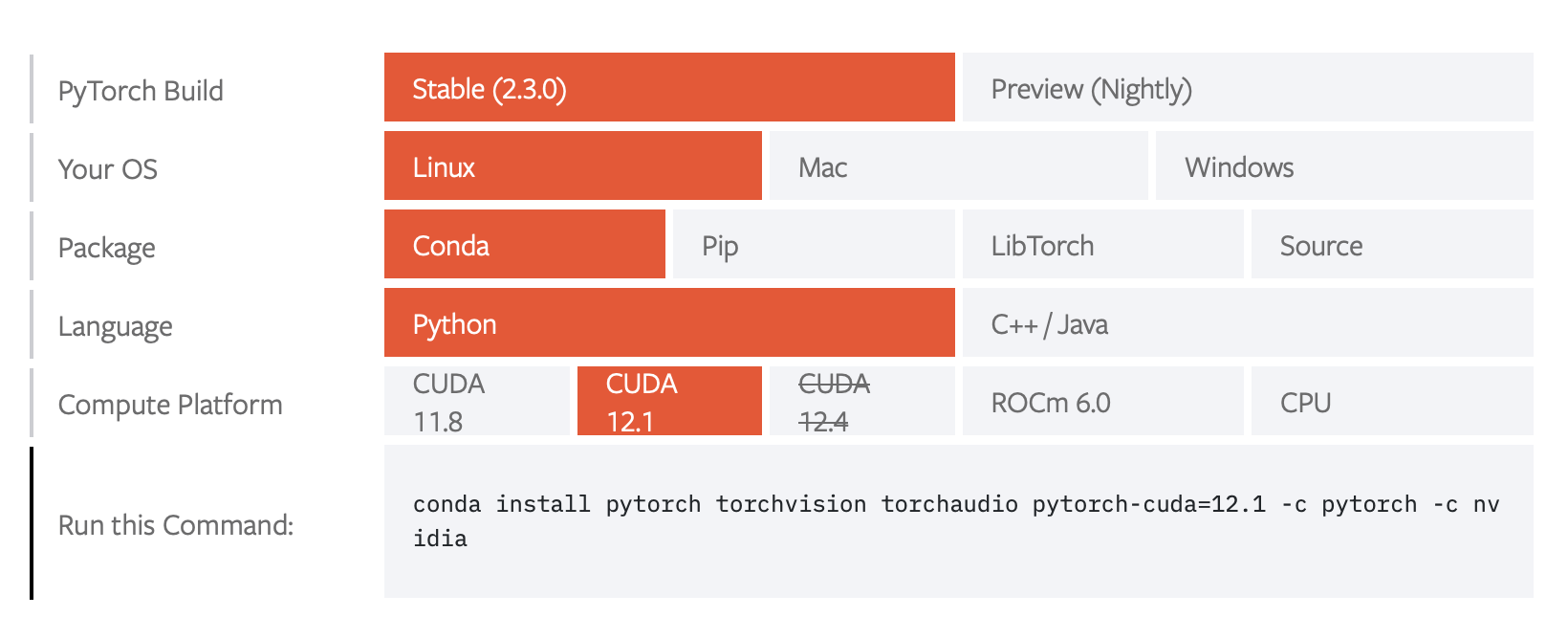

- Create the environment specification based on recommendations from the PyTorch documentation:

You can check PyTorch documentation to get a quick list of the necessary CUDA-specific packages.

Select the following options to get the latest CUDA version:

- PyTorch Build = Stable

- Your OS = Linux

- Package = Conda

- Language = Python

- Compute Platform = 12.1 (Select the version that is less than or equal to the `nvidia-smi` output (see above) on your server)

The `conda install` command from above is:

    conda install pytorch torchvision torchaudio pytorch-cuda=12.1 -c pytorch -c nvidia

The corresponding yaml config would be:

    channels:
      - pytorch
      - nvidia
      - conda-forge
    dependencies:
      - pytorch
      - pytorch-cuda==12.1
      - torchvision
      - torchaudio
      - ipykernel
    variables: {}

The order of the channels is respected by conda, so keep pytorch at the top, then nvidia, then conda-forge.

You can use the **GUI <-> YAML** toggle to edit the config.
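To illustrate how the `conda install` command maps onto the specification, here is a minimal sketch that reads the channels and packages from such a command while preserving channel order. The helpers `command_to_spec` and `to_yaml` are hypothetical names introduced only for this example and are not part of conda or conda-store. Appending `conda-forge` and `ipykernel` mirrors the config shown above and is an assumption of the sketch. `to_yaml` is a hand-rolled renderer for this one shape of data, not a general YAML serializer.

```python
import shlex


def command_to_spec(command, extra_packages=("ipykernel",), fallback_channel="conda-forge"):
    """Convert a 'conda install ...' command into a channels/dependencies dict."""
    tokens = shlex.split(command)
    if tokens[:2] != ["conda", "install"]:
        raise ValueError("expected a 'conda install ...' command")

    channels, packages = [], []
    args = iter(tokens[2:])
    for token in args:
        if token in ("-c", "--channel"):
            channel = next(args, None)
            if channel is None:
                raise ValueError("channel flag given without a channel name")
            channels.append(channel)  # order of appearance is preserved
        elif token.startswith("-"):
            continue  # other flags are ignored in this sketch
        else:
            packages.append(token)

    # Assumption: add the fallback channel last and the extra packages at the end.
    if fallback_channel not in channels:
        channels.append(fallback_channel)
    for package in extra_packages:
        if package not in packages:
            packages.append(package)
    return {"channels": channels, "dependencies": packages}


def to_yaml(spec):
    """Render the two-key spec as YAML text (only handles this simple shape)."""
    lines = []
    for key in ("channels", "dependencies"):
        lines.append(f"{key}:")
        lines.extend(f"  - {item}" for item in spec[key])
    return "\n".join(lines)


spec = command_to_spec(
    "conda install pytorch torchvision torchaudio pytorch-cuda=12.1 -c pytorch -c nvidia"
)
print(to_yaml(spec))
```

The result keeps `pytorch` first, then `nvidia`, then `conda-forge`, matching the channel order recommended above.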

3. Validating the setup

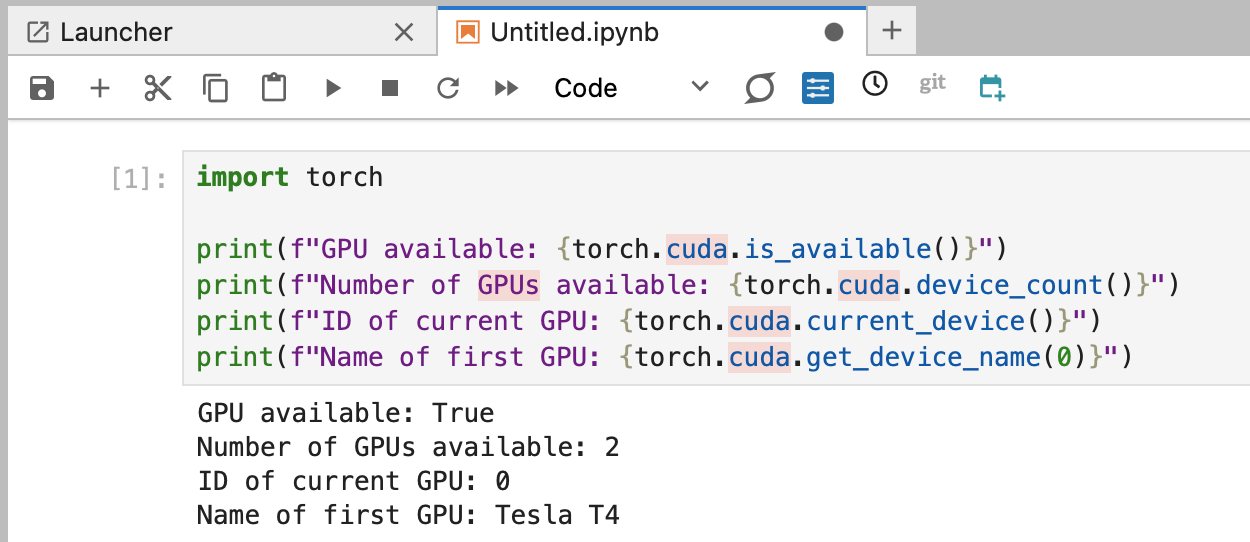

You can check that your GPU server is compatible with your conda environment by opening a Jupyter Notebook, loading the environment, and running the following code:

    import torch

    print(f"GPU available: {torch.cuda.is_available()}")
    print(f"Number of GPUs available: {torch.cuda.device_count()}")
    print(f"ID of current GPU: {torch.cuda.current_device()}")
    print(f"Name of first GPU: {torch.cuda.get_device_name(0)}")

Your output should look something like this: